Zroot/containerd/10 104M 175G 156M legacy The full listing of the lightly used node actually looks like this: $ zfs list It turns out that containerd takes full advantage of ZFS and creates a lot of volumes. Zroot/containerd 744M 175G 216K /var/lib/containerd/io.1.zfs NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT The resulting ZFS setup looks like this: $ zpool list Without this, the volume will eventually expand to its maximum size in the pool, regardless on how much data is actually used by the ext4 filesystem. This enables the TRIM command on the filesystem, and makes it so that ext4 tells the zpool when blocks become unused. Note that we use the discard option for mount. failed to create containerd task: failed to create shim: failed to mount rootfs component. The errors in the containerd logs look like this. The problem is that Kubernetes uses containerd, which in turn uses overlayfs, which doesn’t work on ZFS. We’re using Kubernetes 1.21.6 with containerd 1.5.7 on NixOS 21.05. In practice, we have to jump through a couple of hoops first. Ideally, we’d want Kubernetes to just use some part of zroot/nixos and Longhorn (the storage provider) to use the entirety of the zdata pool. In other words, there’s no additional RAID, volume management, or encryption setup to do.

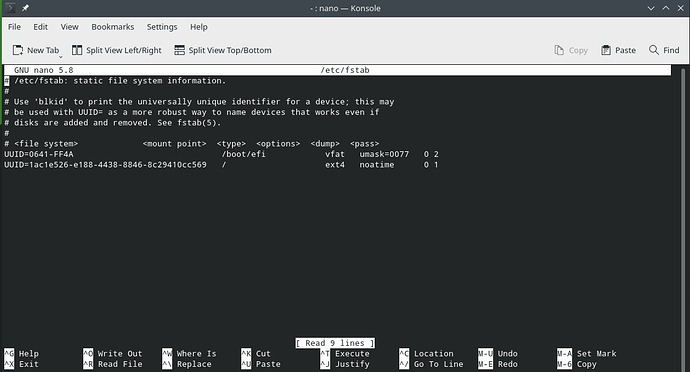

In this setup, ZFS replaces the entire mdadm/LVM/ cryptsetup/ ext4 stack. # zfs create -o mountpoint=legacy zroot/nixos

The zdata pool is on the slow spinning disks, and it will contain the zdata/longhorn-ext4 volume. That’s not going to use up all the 225G available, and we’re going to put the zroot/containerd dataset on it later. The zroot pool is on the fast disks, and the zroot/nixos dataset is the root filesystem. The first few gigs on each disk is used for the mirrored bootloader, and we add the rest to two ZFS pools. The former are the root filesystem, and the latter are used for slow data. The cheap server I got from Hetzner’s server auction has two 225G SSDs and two 1.8T HDDs. It was mostly painless, but there were a few places that required special configuration. ZFS_CMD= "mount -t zfs " else # If it's not a legacy filesystem, it can only be a # native one.I just switched some of my Kubernetes nodes to run on a root ZFS system. Mountpoint= " " fi fi if # Should this actually happen here, I dont think we should be trying to automatically mount this Then if [ " $fs " != " $ " else # Last hail-mary: Hope 'rootmnt' is set! Mountpoint= $(get_fs_value " $fs " org.zol:mountpoint ) if [ " $mountpoint " = "legacy " -o \ Check the 'org.zol:mountpoint' property set in # clone_snap() if that's usable. If then # Can't use the mountpoint property.
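To make the discard note concrete, here is a minimal sketch of how an ext4-formatted ZFS volume could be created and mounted with TRIM enabled. It is not the exact setup from this post: the `1T` size, the sparse `-s` flag, and the `/var/lib/longhorn` mountpoint are assumptions, and the `run` and `setup_volume` helpers are made up for illustration. Only the volume name `zdata/longhorn-ext4` and the `discard` option come from the text above. The script defaults to a dry run that just prints the commands.

```shell
#!/bin/sh
# Sketch: create an ext4-formatted zvol and mount it with discard.
# Assumptions (not from the post): the 1T size, the sparse (-s) flag,
# and the /var/lib/longhorn mountpoint.

VOLUME="zdata/longhorn-ext4"      # volume name used in the post
SIZE="1T"                         # hypothetical size
MOUNTPOINT="/var/lib/longhorn"    # assumed Longhorn data directory
DEVICE="/dev/zvol/$VOLUME"        # device node ZFS on Linux creates for a zvol

# Print each command; execute it only when DRY_RUN=0.
run() {
    echo "+ $*"
    if [ "${DRY_RUN:-1}" = "0" ]; then
        "$@"
    fi
}

setup_volume() {
    # -V creates a volume (block device) instead of a filesystem dataset;
    # -s makes it sparse, so space is allocated only as blocks are written.
    run zfs create -s -V "$SIZE" "$VOLUME"
    run mkfs.ext4 "$DEVICE"
    run mkdir -p "$MOUNTPOINT"
    # discard makes ext4 issue TRIM for freed blocks, so the zvol can
    # hand that space back to the pool.
    run mount -o discard "$DEVICE" "$MOUNTPOINT"
}

setup_volume
```

Run as-is, the script only prints the four commands it would execute. Setting `DRY_RUN=0` and running it as root would apply them, so check the size and mountpoint against your own layout first.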
